How AI is changing decision authority in data-driven organizations

AI is changing how teams operate, make decisions, and identify the right talent

The conversation around artificial intelligence (AI) and employment still leans on a simple question: will machines replace people? That framing misses what is already happening.

The deeper shift is not whether jobs disappear, but where judgment sits, how responsibility is distributed, and who remains accountable once AI is introduced.

Employment volume may change only slightly, but the structure of work itself is changing far more. Tasks are shifting, decision boundaries are moving, and systems that once relied on human judgment are being reorganized around data pipelines and automated workflows.

AI adoption is reallocating work, not eliminating it

What began as experimentation with AI has moved into operational layers. Teams now operate inside environments where AI models and automated decision points are already embedded.

AI adoption forces a level of process formalization that many organizations previously avoided. Manual workflows can survive on tacit knowledge, inconsistent approvals, and undocumented exceptions because people compensate in real time. Once AI enters the flow, those gaps become operational liabilities. Systems require cleaner inputs, clearer rules, defined ownership, and explicit escalation paths.
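To make the idea of explicit rules, ownership, and escalation paths more concrete, here is a minimal Python sketch of how a written-down routing rule might look. Everything in it is a hypothetical illustration: the `Decision` fields, the `route` function, the owner labels, and the 0.8 confidence threshold are assumptions, not taken from any particular system.

```python
from dataclasses import dataclass

# Hypothetical threshold: below this model confidence, a human must review.
CONFIDENCE_THRESHOLD = 0.8


@dataclass
class Decision:
    case_id: str
    model_confidence: float  # 0.0 to 1.0, as reported by the model
    inputs_complete: bool    # whether the case passed input validation


def route(decision: Decision) -> str:
    """Return the named owner of a decision under explicit, documented rules."""
    # Incomplete inputs go back to whoever owns the data, not to the model.
    if not decision.inputs_complete:
        return "data-owner"
    # Low-confidence cases follow the escalation path to a human reviewer.
    if decision.model_confidence < CONFIDENCE_THRESHOLD:
        return "human-reviewer"
    # Everything else is handled automatically.
    return "automated"
```

The point of the sketch is not the specific threshold but that every case has a named owner. The exceptions that people used to absorb informally now have to appear as explicit branches.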

Layoffs attributed to AI and automation make headlines, yet most cases reveal a more complex pattern. Organizations introduce AI assistants, reduce operational overhead, and then reassign or restructure teams. Some roles disappear. Others emerge around integration, oversight, and system support.

AI systems now absorb repetitive execution, which exposes inefficiencies that were previously hidden inside manual workflows. Layers of coordination, duplicated responsibilities, and inflated workflows are no longer masked by human effort.

Once those inefficiencies surface, organizations redesign processes. Automation does not eliminate work. It reveals structural problems that trigger redesign. In that sense, effective AI adoption often functions less as a labor event and more as a workflow and governance audit.

Productivity gains are real, but uneven

The strongest productivity gains usually appear in template-driven work, routine synthesis, and repetitive production steps. In those areas, AI-assisted workflows can shorten cycle times and reduce the time required to move from input to draft, recommendation, or action.

However, the productivity picture changes as complexity rises. Time saved on straightforward tasks is often offset by time spent checking assumptions, tracing data dependencies, and correcting mistakes introduced upstream.

Architectural design, business logic, and context-sensitive decisions still depend on human reasoning. Models assist, but they do not replace understanding.

For organizations, that separation is operationally important. It affects where automation is worth deploying, where human review must remain in place, and how productivity should be measured. Local speed improvements do not automatically become enterprise productivity if they increase rework or decision risk.
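A small worked calculation can show how a local time saving can shrink or turn negative once review and rework are counted. The Python sketch below is illustrative only: the function name, its parameters, and all of the numbers in the usage note are invented assumptions, not measurements.

```python
def net_hours_saved(
    tasks: int,
    hours_saved_per_task: float,
    review_hours_per_task: float,
    rework_rate: float,
    rework_hours: float,
) -> float:
    """Estimate net hours saved after subtracting review and rework effort.

    rework_rate is the assumed fraction of tasks that need correction;
    rework_hours is the assumed effort to fix one such task.
    """
    gross_saving = tasks * hours_saved_per_task
    review_cost = tasks * review_hours_per_task
    rework_cost = tasks * rework_rate * rework_hours
    return gross_saving - review_cost - rework_cost


# Hypothetical scenario: 100 tasks, 1 hour saved each, 15 minutes of review each.
low_rework = net_hours_saved(100, 1.0, 0.25, rework_rate=0.1, rework_hours=4.0)
high_rework = net_hours_saved(100, 1.0, 0.25, rework_rate=0.2, rework_hours=4.0)
```

Under these assumed inputs, a 10% rework rate leaves a net saving of 35 hours, while a 20% rework rate turns the same 100 hours of gross saving into a net loss of 5 hours. The per-task speedup is identical in both cases. Only the downstream correction cost differs.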

Roles are expanding beyond their original boundaries

Role boundaries are beginning to dissolve. Work is moving away from clearly defined functions and into overlapping areas where data quality, tooling, and decisions interact. Isolated tasks are becoming rare. Work increasingly depends on actions that connect multiple systems and teams.

As a result, demand is being reshaped. Roles tied to integration, operational reliability, and decision support gain importance. Specialists now work across multiple systems and teams, which also makes clear communication essential to keeping work moving.

Engineers who can integrate models into real workflows and take on responsibilities related to reliability become more valuable than those who only build isolated components. Analysts are judged not only by outputs, but by how they interpret results within context and identify weak signals. Their role is moving closer to influencing decisions. Security specialists must now account for the behavior of AI agents, not only human users.

Systems can generate outputs at scale, but they cannot always guarantee correctness, relevance, or safety. That is why decision quality becomes the differentiator.

Hiring systems are entering an unstable phase

One of the least discussed consequences of AI adoption is its impact on hiring itself.

HR screening pipelines often rely on automated filtering systems that prioritize pattern matching over deeper indicators of capability. At the same time, candidates use AI tools to generate resumes, optimize written responses, and simulate interviews.

The result is a system where automation interacts with automation. Recruiters receive polished profiles that reflect optimization rather than skill, and automated systems pass candidates whose materials match expected patterns.

Strong candidates can be filtered out because of mismatched phrasing, mixed domain experience, or even biases tied to factors such as race and gender. Weaker candidates can advance because their materials are structured in ways that scoring systems favor.

Historical hiring data becomes less reliable under these conditions. Past success profiles were built around workflows that AI is already reshaping. As role boundaries shift and task composition changes, candidate evaluation becomes harder rather than easier.

For data-driven organizations, this turns hiring into a signal quality problem because the indicators used to infer future performance are becoming less stable.

Role demand is shifting faster than organizations can adjust

Predictions of widespread job loss have not materialized. The market is adjusting through redistribution. Hiring slows in some areas while demand rises in others. The disruption appears less as uniform job destruction and more as market friction, where demand shifts faster than role definitions, compensation logic, and hiring signals can adapt.

Lower-complexity roles usually face pressure first. Tasks built around clear instructions and repetitive execution are the easiest to automate or compress. At the same time, demand for positions that require coordination, interpretation, oversight, and system-level thinking is growing.

Organizations can receive large volumes of applicants and still struggle to fill these roles because the central issue is not just the quantity of candidates. It is the widening mismatch between emerging work requirements and the way capability is identified, developed, and rewarded.

What this means for organizations

Several practical implications follow from these shifts.

Hiring processes need to be redesigned. Relying solely on automated filtering is not sufficient. Evaluation must incorporate deeper signals of capability.

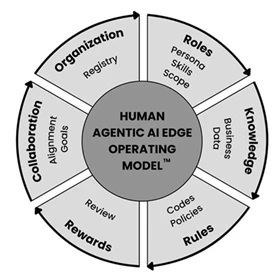

Workforce planning becomes more complex. Traditional role definitions lose relevance. Teams must be structured around functions that span multiple domains, with clearer ownership across workflows that combine human and machine inputs.

Skill development also changes direction. Instead of focusing on isolated expertise, organizations need individuals who can operate across systems, understand dependencies, and make informed decisions under uncertainty.

In many cases, internal redeployment and cross-functional development become more valuable than searching externally for perfect role matches. This often requires a sustained commitment to targeted training and education, ranging from specialized AI certifications to broader programs such as a Doctor of Business Administration (DBA) in business intelligence.

Performance measurement must change as well. In AI-enabled workflows, output volume and speed no longer capture where value or risk actually sit. Organizations need better visibility into validation efforts, exception handling, and decision quality.

Final thoughts: A system in transition

AI is not removing the need for people. It is changing what people are responsible for. Routine execution declines in importance. Context, judgment, and coordination become central. Hiring becomes less predictable. Roles grow less rigid. Systems become more interconnected. The result is a transition toward a different kind of complexity and the next stage of work, one where understanding the system matters more than operating within it.