Statistical Process Control, The Alpha and Omega of Six Sigma Part 1: The Origin Story

This is the first in a four-part series on Statistical Process Control (SPC), which is the foundation for Six Sigma, providing the best known method for capturing the Voice of the Process and using data to manage a process. Organizations that practice Statistical Process Control as part of a comprehensive quality management system will identify required Six Sigma Projects and Kaizen events more readily, complete them more quickly and not only hold the gains but continue to improve after a Six Sigma project is completed. Identifying stability in a process enables capability studies, DPMO calculation and baselining; without stability, most of our other statistical tests on process data are suspect. In the series, we will explore the history of Statistical Process Control, some of its most common tools and concepts, and specific Six Sigma applications.

Statistical Process Control History

Seeds for Statistical Process Control, and for the Quality Movement and Six Sigma, were sown in the farm fields of England; the academic halls of Paris, Goettingen, Cambridge (England and Massachusetts) and Princeton; the steel mills of New York and Pennsylvania; auto manufacturing plants in Michigan (and later Tokyo and Kyoto); and telephone equipment manufacturing plants of New Jersey and the Midwest. For while Ford and Taylor were developing scientific methods for reducing work to measurable components, Fisher and Hotelling were building on the statistical theories of Galton, Laplace and Gauss and beginning to shape statistics into a coherent set of methods for providing truly scientific measurements.

This groundwork set the stage for an innovator in the right place and the right time. The most technologically-advanced equipment being commercialized at the time was all being developed in the telephone industry. American Telephone and Telegraph (AT&T) hired the cream of the engineering crop and gave them state-of-the-art laboratories and a great deal of creative freedom. Walter Shewhart, a doctor of physics, was hired by Western Electric, the manufacturing unit for AT&T, to "study the carbon microphone and to develop experimental techniques for measuring its properties."1 Shewhart, who had studied with Fisher in England, was extremely interested in statistical methods—methods then new to engineering applications—and he began to look for more ways to incorporate them into the manufacture of telephone components.

Because of his understanding of variation and his interest in studying the causes of variation, Shewhart soon realized that although there is variation in everything, there are limits to the day-to-day, random variation observed in most processes, and that these limits could be derived statistically. In 1924, he produced the first process control chart, which set the limits of chance variability according to statistical guidelines. The control chart was a brilliant innovation for management, because it indicated when management action could be taken and which types of action would be effective. These control charts are now used across numerous industries from health care to hospitality, from automaking to chipmaking; tracking everything from laser welder performance characteristics to oxygen uptake in asthma patients, from customer satisfaction scores to quarterly results.

Figure 1 depicts a modern control chart. It’s a fairly simple tool: a time-series plot of the measure of interest, with centerlines (averages), and upper and lower control limits calculated from the data. By looking both for points outside the control limits and for non-random patterns within the control limits, managers can separate the common cause variation (inherent in the producing system) from assignable cause variation arising from emergent factors. In effect, the control chart articulates the "Voice of the Process," capturing the background noise in a way that makes it easy to discern signals when they happen.

Figure 1—Control Chart
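The article does not say which type of control chart Figure 1 shows, so the following is only a minimal sketch of how a centerline and control limits can be calculated from the data, assuming an individuals (XmR) chart. The function names and the sample diameters are hypothetical, chosen for illustration. The constant 2.66 is the standard XmR scaling factor (3 divided by d2 = 1.128 for moving ranges of two points). Only the first check described above, points outside the control limits, is covered; rules for non-random patterns within the limits are left out.

```python
from statistics import mean


def individuals_chart_limits(values):
    """Return (center, lcl, ucl) for an individuals (XmR) chart.

    Assumption: one measurement per time period, in time order.
    """
    if len(values) < 2:
        raise ValueError("need at least two observations")
    center = mean(values)
    # Moving range: absolute difference between consecutive observations.
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    mr_bar = mean(moving_ranges)
    # 2.66 = 3 / d2, where d2 = 1.128 for moving ranges of size 2.
    half_width = 2.66 * mr_bar
    return center, center - half_width, center + half_width


def points_outside_limits(values):
    """Return (index, value) pairs that fall outside the control limits."""
    _, lcl, ucl = individuals_chart_limits(values)
    return [(i, v) for i, v in enumerate(values) if v < lcl or v > ucl]


# Hypothetical diameters, for illustration only.
diameters = [10.1, 9.9, 10.0, 10.2, 9.8, 10.0, 10.1, 9.9, 12.0, 10.0]
center, lcl, ucl = individuals_chart_limits(diameters)
signals = points_outside_limits(diameters)
print(f"center={center:.3f} LCL={lcl:.3f} UCL={ucl:.3f}")
print("signals:", signals)
```

With these made-up numbers, the ninth measurement (12.0) falls above the upper control limit and would be investigated for an assignable cause; the remaining points are treated as common cause variation.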

The other message of the control chart is that if we do want to improve the process (if we need the average higher or lower, or want to reduce the variation), we have to make fundamental changes to the process itself. If we need smaller or larger mean diameters, for example, or less variation, we now know that the process has to change before it will work better. This idea, that we can set limits for predicting the behavior of processes, was the foundation of the modern quality movement.

Two of Shewhart’s protégés at Western Electric, W. Edwards Deming and Joseph Juran, took his work further. They (along with Shewhart and others) were involved in a series of lectures that set standards enabling the vast wartime production machine in the United States during World War II. Those who attended these lectures later formed the American Society for Quality Control, now the American Society for Quality (ASQ).

Throughout most of the period between 1945 and 1972, U.S. manufacturing enjoyed unprecedented market domination, driven largely by the fact that it possessed the only industrial base left intact after the war. American manufacturers were driven by quantity, not quality. They could sell virtually everything they made. Many wartime quality initiatives lost favor after production lines were retooled for peacetime production. Quality was relegated to two primary functions: supplier quality and quality assurance. Control charting was largely a lost art in the United States.

Not so in Japan, though. Japan had to rebuild from the ground up. The Japanese were assisted by the United States under General MacArthur, who was tasked with helping rebuild the Japanese economy. Deming and Juran were both invited to lecture and consult as part of this effort. Their influence was profound; to this day, the most prestigious quality award in Japan is the Deming Prize. Many Japanese companies began using control charts and studying customer needs. Noriaki Kano developed a more sophisticated model for customer satisfaction, and Genichi Taguchi published a paper that "[led] unavoidably to a new definition of World Class Quality: 'On Target With Minimum Variance'"2 (that is, being on target while continually reducing variation).

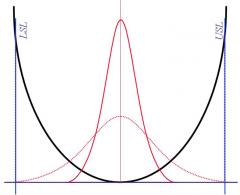

The Taguchi Loss Function is depicted in Figure 2. The vertical lines represent the upper and lower specification limits (USL, LSL), set by the customer. The two distribution curves represent output from processes in statistical control. The wider one (dashed line) has its mean output on target, and most of its output will be within the specification limits, meeting customer demand. The narrower (solid line) curve is also on target, but is using about half of the specified tolerance (conceptual Six Sigma). Much more of its output is close to the target. Taguchi’s assertion was that there is some loss (here represented as a quadratic, U-shaped function), which is very low near the target, but its magnitude accelerates as the output moves toward the specification limits.

Figure 2—Taguchi Loss Function
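The comparison between the two curves in Figure 2 can be made concrete with a small calculation. The sketch below assumes the usual quadratic form of the loss, L(y) = k(y - T)^2, where T is the target and k is a cost constant; for a process with mean mu and standard deviation sigma, the average loss per unit is then k(sigma^2 + (mu - T)^2). The target, specification limits, cost at the limit, and standard deviations are all hypothetical values chosen for illustration, not figures from the article.

```python
def taguchi_loss(y, target, k):
    """Quadratic loss for a single unit measured at y."""
    return k * (y - target) ** 2


def expected_loss(mean, sigma, target, k):
    """Average loss per unit: E[k (Y - T)^2] = k * (sigma^2 + (mean - T)^2)."""
    return k * (sigma ** 2 + (mean - target) ** 2)


# Hypothetical values, for illustration only.
target = 10.0
usl, lsl = 10.6, 9.4
cost_at_spec_limit = 4.0  # assumed loss for a unit exactly at a spec limit
k = cost_at_spec_limit / (usl - target) ** 2

# Wide process: spec limits sit about 3 sigma from the target.
wide = expected_loss(mean=target, sigma=0.2, target=target, k=k)
# Narrow process: uses about half the tolerance (limits about 6 sigma away).
narrow = expected_loss(mean=target, sigma=0.1, target=target, k=k)

print(f"wide process average loss:   {wide:.3f}")
print(f"narrow process average loss: {narrow:.3f}")
print(f"ratio: {wide / narrow:.1f}")
```

Under these assumptions both processes are on target and both produce output almost entirely within specification, yet halving the standard deviation cuts the average loss to a quarter, which is the point of "On Target With Minimum Variance."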

In the decades after the war, Japanese manufacturers—using techniques taught to them by Deming and others—began cutting into American market dominance. Nikon and Canon supplanted American and German cameras; Sony and Panasonic replaced Zenith and RCA in consumer electronics. The largest shock came in the early 1970s, when the Oil Crisis caused American auto buyers to look for smaller, more fuel-efficient cars. They found Toyota, and when they did, they made another discovery: these cars were not only less expensive, they were much more reliable and the levels of quality were considerably higher than those of American cars. This sent a shockwave through one of America’s largest and most profitable industries, a shockwave that became a crisis.

In 1979, while researching for an NBC White Paper program called "If Japan Can, Why Can’t We?", Clare Crawford-Mason met W. Edwards Deming. She ended up prominently featuring him in the program, and the morning after it aired, Deming’s phone began ringing and didn’t stop until he died some 14 years later. Ford, and later GM, hired Deming. He brought in Statistical Process Control experts and systems thinkers to help them turn their quality and productivity around. In addition, he re-introduced American industries to statistical methods. Millions of engineers, managers, and others attended 3-day public seminars. Membership in ASQC increased exponentially. The quality movement had been reborn.

1 Schultz, L. E. (1994). Profiles in Quality. White Plains, NY: Quality Resources.

2 Wheeler, D. J., and Chambers, D. S. (1992). Understanding Statistical Process Control, 2nd Ed. Knoxville, TN: SPC Press, Inc.

Continue to part 2 of this series.